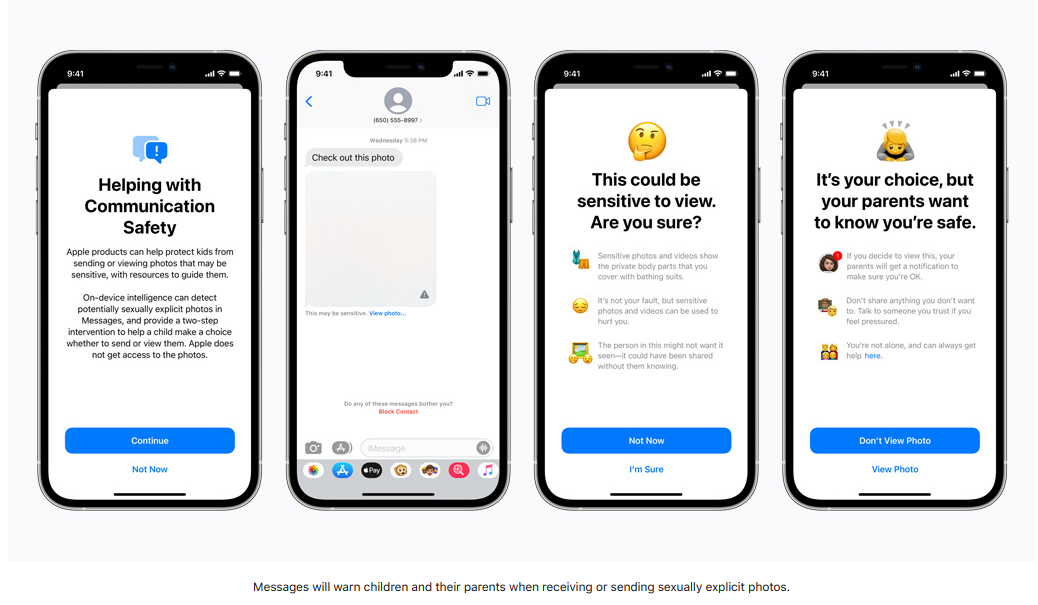

Anushka Mehta, Co-Founder, Rightantra

Apple Inc.’s controversial announcement in August 2021 regarding the rollout of new child safety measures on iPhones and other products drew varied reactions from child rights advocates and privacy experts. Amid these mixed reactions, Apple decided to postpone the release of its measures limiting the spread of Child Sexual Abuse Material (“CSAM”), giving itself room to take into account feedback from stakeholders.

For all the Android users and others living in their own bubble, Apple proposed to implement tools within its devices to prevent the sexual exploitation of children through technological communication. The proposed measures were threefold: screening of pictures exchanged over iMessage, coupled with parental controls; detection of CSAM in iCloud Photos; and lastly, intervention by Siri during web searches related to CSAM.

On one hand, these measures were lauded as critical steps in the fight against those trafficking CSAM; on the other, there has been an equal, if not larger, amount of criticism. While security experts and privacy enthusiasts have appreciated Apple’s well-meaning intention, they have raised strong concerns about backdoor surveillance and the undermining of end-to-end encryption. This is shocking because Apple has always valued the privacy of its users above all, yet these proposed measures boil down to an age-old question: can the privacy of a child or another user be compromised in the name of protection?

Right to Privacy vis-a-vis Child Protection

The right to privacy has been recognised as a basic human right in most developed jurisdictions and in international treaties. While it is subject to reasonable restrictions, as is the case with most human rights, the protection measures appear to curb the autonomy of children, which is often counterproductive to their safety.
This issue stems from Apple’s proposal to blur pictures on iMessage if they are sexually explicit, with parental notification that can be enabled for children below the age of thirteen years. Research by two psychologists and a computer scientist raises concerns about the parental notification feature, since warnings have traditionally been observed to have a paradoxical effect on adolescents. These studies showed that those who were warned to take into account the privacy of others before sharing an image were more likely to share that image.

Further, concerns about the disastrous repercussions of these measures for children from abusive homes were raised in an open letter signed by organisations such as the Electronic Frontier Foundation, Privacy International and Big Brother Watch. The letter stated that the parental notification feature presumes a good relationship between the child and the parent, which is not always the case for children who are abused at home or for closeted children from the LGBTQ+ community. Rather than notifying the parents that a child below the age of thirteen has viewed a sexually explicit image, it may be more beneficial for Apple to be notified instead.

For its part, Apple has tried to address such concerns in its detailed FAQs and other material, clarifying that the proposed feature would apply only to sexually explicit images sent or received in Messages on child accounts set up under Family Sharing. Further, Apple would not compromise on end-to-end encryption, since it would not have access to the communications; only its on-device technology would evaluate images in Messages and present an intervention if an image is determined to be sexually explicit.

Source: Apple, Expanded Protections for Children

The second set of concerns relates to CSAM scanning of iCloud images, which would take place as soon as they are uploaded to the cloud, irrespective of whether the images were taken before or after the introduction of these measures. Most experts are concerned about the non-consensual nature of this scanning, since a majority of Apple users back up their data to iCloud. Another major red flag raised by several individuals and organisations is the possible expansion of such scanning to other content, such as anti-government sentiment and extremism, along with a Government mandate for all tech companies to comply with such standards. This could lay the foundation for censorship, surveillance and persecution on a global basis. That said, it is doubtful whether this is a real concern, since companies like Google, Microsoft and Facebook already scan images for CSAM.

The foundation for the scanning is the “NeuralHash” system, which compares known CSAM images to photos on a user’s iPhone before they are uploaded to iCloud. If there is a match, that photograph is uploaded with a cryptographic safety voucher, and once a certain threshold of matches is reached, a review is triggered, after which the account is disabled and the images are reported. However, Apple has allegedly been scanning iCloud mail for CSAM-related material since 2019, and thus this is not a new development. Nonetheless, Apple has provided reassurances that its technology is accurate and would not be susceptible to flagging innocent content as CSAM, since a human review is conducted prior to the creation of a report. Further, Apple has time and again reiterated that it will refuse any demands by Governments to include non-CSAM hashes in its system.

Conclusion

In light of the concerns raised above and the subsequent assurances given by Apple, it appears that Apple users will be playing a waiting game until more clarity is provided by Apple.
However, one thing cannot be denied: taking steps towards preventing the spread of CSAM is a noble and necessary undertaking for large communications and social media platforms. Unfortunately, there is a fine line between the right to privacy and the protection of children, and it is a difficult one to walk. Despite being an Android user myself, I cannot help but say that if anyone can do it, it would be Apple.
